Adapting In a large open space by James Tenney for Unity Game Engine

This tutorial has been prepared to offer an example for the first submission on the course Interactive Sound Environments. Its main aims are to:

- Work through challenges in which sound parameters are set and controlled with C# scripts

- Set up prefabs that can be spawned and destroyed based on our own criteria (again, set up in script)

- Introduce custom DSP code into the Unity game engine via Faust

Rationale

In order to give yourself a specific task, with defined and achievable elements, it’s no bad idea to start learning Unity by trying to realise or adapt an existing idea or score.

For this tutorial, I decided to explore James Tenney’s In a large open space (Tenney, 1994). The piece has a very clear set of instructions that are easy to imagine translating to different circumstances and contexts. It is therefore an ideal project to port into Unity: there is some simple math involved in controlling pitch; the experience is expected to be three-dimensional (the listener walks around a large space); and the control of timbre and colour offers scope to develop the sound elements in great depth once a basic implementation of Tenney’s score is in place.

A score of the piece is available here: http://www.frogpeak.org/unbound/tenney/InALargeOpenSpace.pdf

Challenge 1 - work out the pitch schema

You can see on the score the pitches that are expected. Can you work out how to derive each pitch mathematically? It is very simple, so don’t work too hard to get the answer. Essentially, you’ll need to find the frequencies that are equivalent to each pitch in the score. Clue: if you know the frequency of the lowest note, the rest should be very easy to sort out.

OK, now that you know the math, can you write a script that will perform it for you? It might be good to be able to set the fundamental with a public float.

Finally, can you randomise which frequency might be selected On Start?
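If you want to check your answer, here is a minimal sketch of one reading of the score. It assumes (this is an assumption, not something stated in this tutorial) that the pitches are the first 32 partials of a harmonic series on a low F of roughly 43.65 Hz, so each frequency is simply the fundamental multiplied by a whole number. The class and method names are hypothetical, and the code is plain C# with no Unity dependency so the math is easy to see.

```csharp
using System;

// Hypothetical helper: harmonic-series math, assuming the score's pitches
// are whole-number multiples of a single fundamental.
public static class HarmonicSeries
{
    // Frequency of the n-th partial (partial = 1 is the fundamental itself).
    public static float Frequency(float fundamental, int partial)
    {
        if (partial < 1)
            throw new ArgumentOutOfRangeException(nameof(partial));
        return fundamental * partial;
    }

    // Choose one of the first partialCount partials at random.
    public static float PickRandom(float fundamental, int partialCount, Random rng)
    {
        // Random.Next's upper bound is exclusive, hence the + 1.
        int partial = rng.Next(1, partialCount + 1);
        return Frequency(fundamental, partial);
    }
}
```

Inside Unity you could expose `public float fundamental = 43.65f;` on a MonoBehaviour and call `HarmonicSeries.Frequency(fundamental, UnityEngine.Random.Range(1, 33))` from `Start()`; the integer version of `Random.Range` also excludes its upper bound.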

Challenge 2 - build a world

The score says that the listener needs to be able to move around a space, so you will need a FirstPersonController prefab and a floor to walk on. The first person controller (FPC) must also be able to hear sound, so check that there is an Audio Listener on the FPC.

Challenge 3 - make a prefab that’s going to hold an audio asset

To do this, you’ll need to make a Prefabs folder. Next, you’ll need to create a game object, make a cube and give it a name. Drag the cube into the Prefabs folder that you just made and add an Audio Source component.

Challenge 4 - get a sound synthesis source for Unity

You can follow this and make an oscillator in C# if you like:
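As one possible starting point, here is a minimal sketch of a sine oscillator written directly in C#, using Unity's `OnAudioFilterRead` callback. The class name and default values are assumptions made for illustration. Note that this version overwrites the audio buffer, whereas the Faust version further down multiplies the incoming audio by the oscillator.

```csharp
using UnityEngine;

// Hypothetical component: attach to a GameObject that has an AudioSource.
[RequireComponent(typeof(AudioSource))]
public class SineOscillator : MonoBehaviour
{
    public float frequency = 440f;             // Hz
    [Range(0f, 1f)] public float gain = 0.1f;  // linear amplitude

    private double phase;
    private double sampleRate;

    void Awake()
    {
        // Read the sample rate on the main thread, before audio starts.
        sampleRate = AudioSettings.outputSampleRate;
    }

    // Called by Unity on the audio thread with an interleaved buffer.
    void OnAudioFilterRead(float[] data, int channels)
    {
        if (sampleRate <= 0) return;

        double increment = 2.0 * System.Math.PI * frequency / sampleRate;
        for (int i = 0; i < data.Length; i += channels)
        {
            float sample = gain * (float)System.Math.Sin(phase);

            // Write the same sample to every channel of this frame.
            // To mirror the Faust plugin, use data[i + c] *= sample instead.
            for (int c = 0; c < channels; c++)
                data[i + c] = sample;

            phase += increment;
            if (phase > 2.0 * System.Math.PI)
                phase -= 2.0 * System.Math.PI;
        }
    }
}
```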

However, I have found it valuable to explore Faust’s online IDE (note: use Chrome for this). Here’s some code to build a simple sine wave oscillator that can be surround-panned within Unity.

declare name "osci";

declare version "1.0";

declare author "PARKER";

declare license "BSD";

declare copyright "(c)GRAME 2009";

//-----------------------------------------------

// Sinusoidal Oscillator

// (with linear interpolation)

//-----------------------------------------------

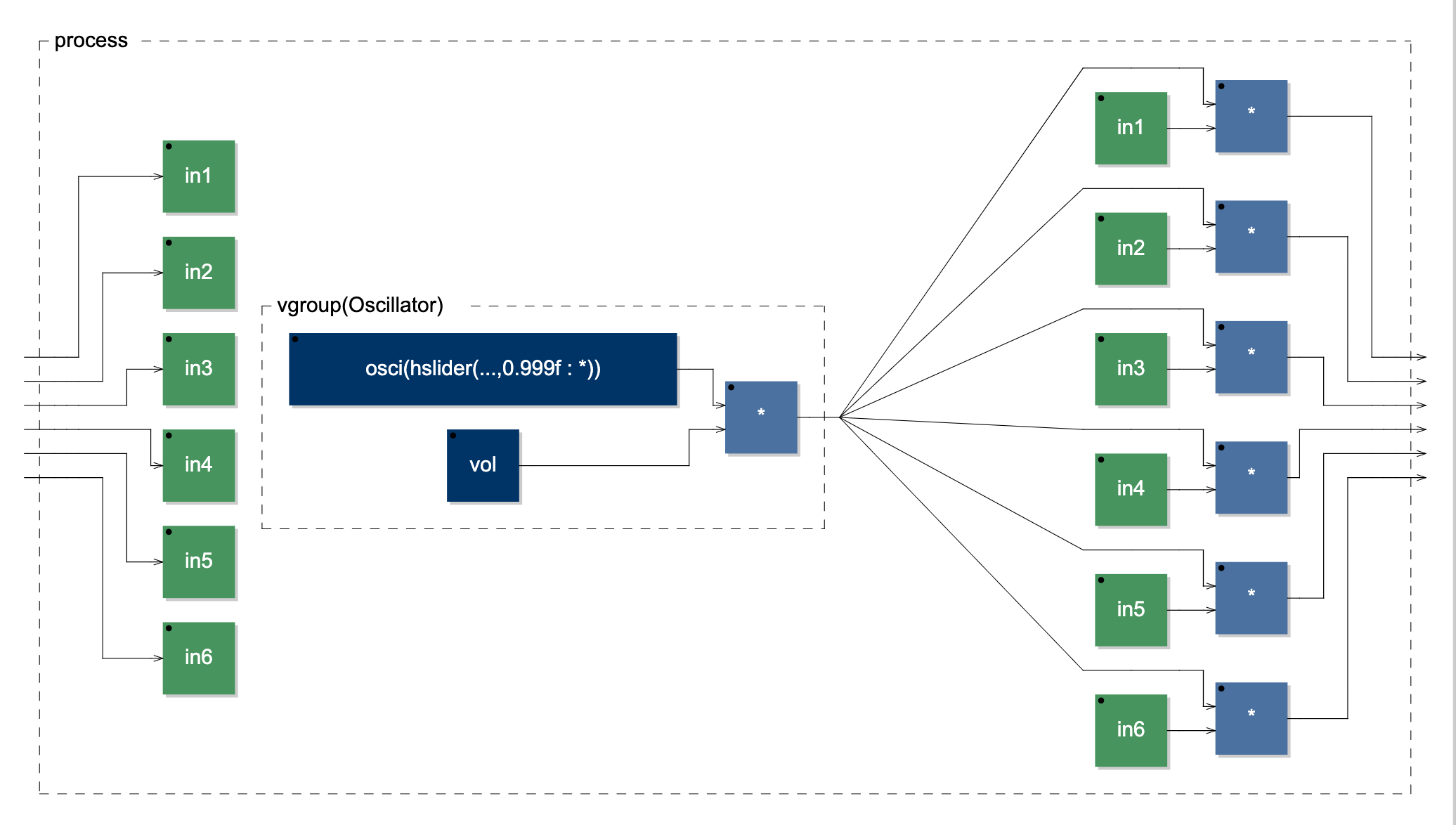

// modification by mparker: this plugin takes audio inputs and multiplies each input by the output of the oscillator. This ensures that Unity's spatialisation parameters are applied to the source; otherwise, the plugin won't spatialise in Unity. You'll notice that it's 5.1-ready, with 6 channels of input and output.

import("stdfaust.lib");

vol = hslider("volume [unit:dB]", -24, -96, 0, 0.1) : ba.db2linear : si.smoo ;

freq = hslider("freq [unit:Hz]", 1000, 20, 24000, 1) : si.smoo ;

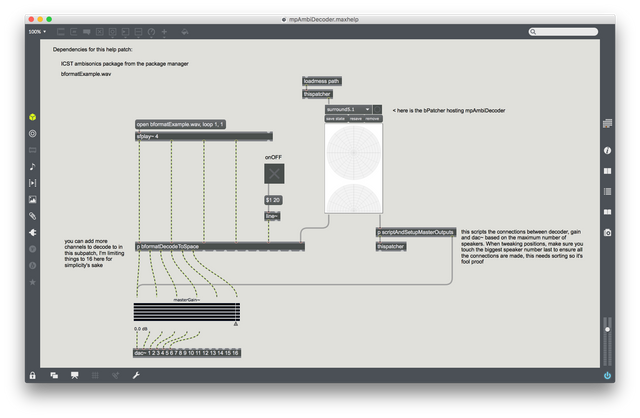

process(in1,in2,in3,in4,in5,in6) = vgroup("Oscillator", os.osci(freq) * vol) <: (_*in1,_*in2,_*in3,_*in4,_*in5,_*in6);

If you look at the DSP diagram, it may be easier to see what we need to do with custom DSP in order for Unity to be able to pan it within its own spatialisation universe.

You don’t need to worry about this now, but if you want to make more use of Faust, you’ll need to install several toolchains to compile your Faust DSP for different targets: the Xcode command line tools, the Android SDK and NDK, and MinGW-w64. These will allow you to compile your custom DSP code to any of the possible output formats.

I have compiled the above code, and a stereo version for this workshop so you can download these here:

Download the version that suits your setup and install the package inside Unity.

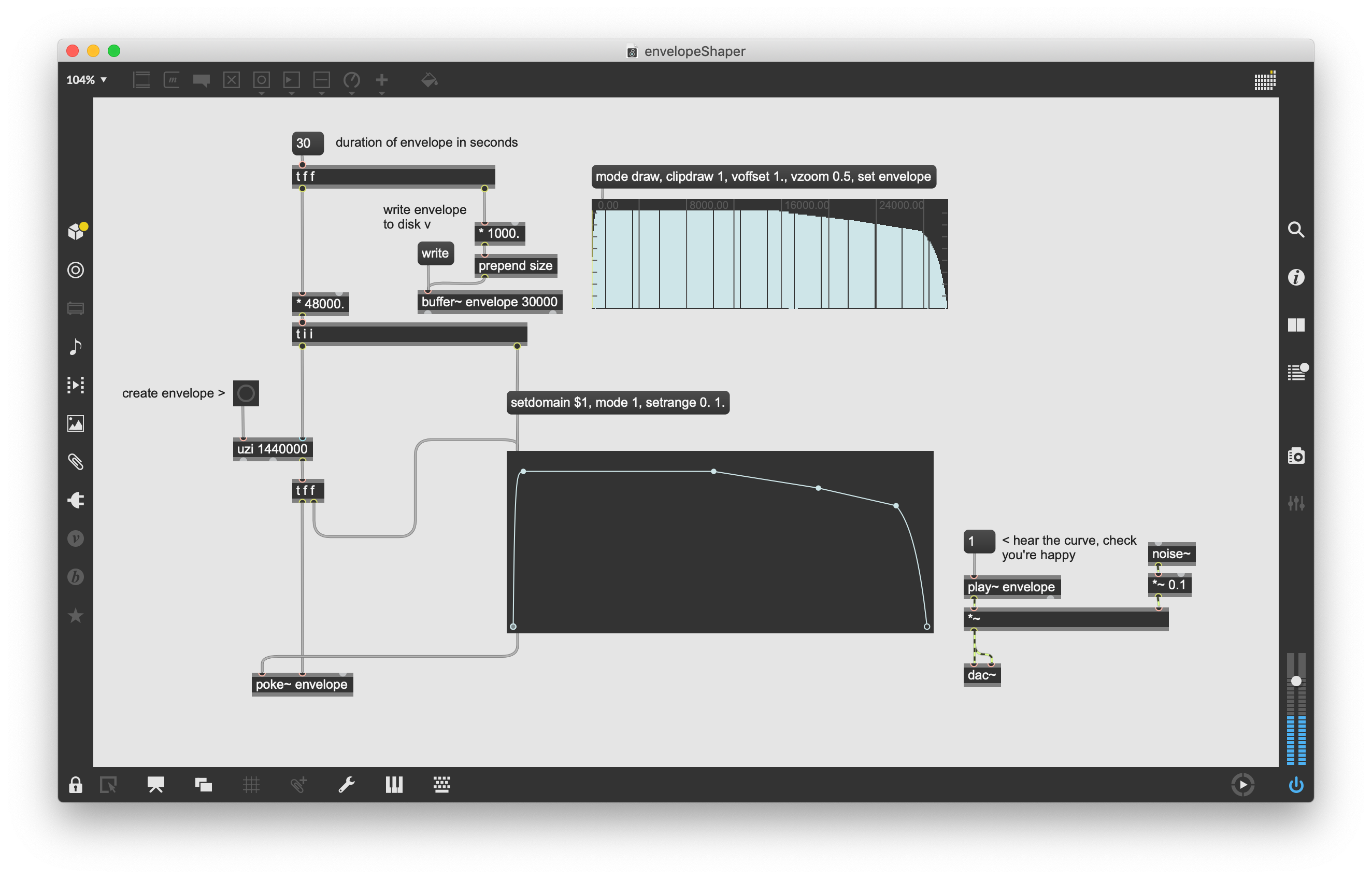

Challenge 4.5 - make an envelope

This envelope is important. It will allow Unity’s spatial audio parameters to be multiplied by the synthesis source. It will also give us a 30 second sound file that we can play back in the audio source and shape the amplitude of the Faust DSP over time.

A final and potentially very powerful advantage here is that you can change the pitch of playback of the audioSource and this will change the duration of the envelope without affecting the pitch of the synthesis DSP. This means you could quite easily and cleverly generate rhythmical material by controlling the pitch of the audioSource and having a range of different envelopes on different prefabs. Some prefabs could be very short and sharp, others more pad-like….
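If you would rather generate an envelope in script than draw one in an audio editor, the following is a minimal sketch under that assumption. It builds a gain curve (linear fade in, hold at 1.0, linear fade out) as an array of samples. The class name, method name and parameters are hypothetical, and the code has no Unity dependency.

```csharp
using System;

// Hypothetical helper: builds a simple attack/hold/release gain curve.
public static class EnvelopeBuilder
{
    public static float[] Build(int sampleRate, float seconds,
                                float attackSeconds, float releaseSeconds)
    {
        int total = (int)(sampleRate * seconds);
        int attack = (int)(sampleRate * attackSeconds);
        int release = (int)(sampleRate * releaseSeconds);
        if (attack + release > total)
            throw new ArgumentException("attack + release exceed total length");

        var envelope = new float[total];
        for (int i = 0; i < total; i++)
        {
            if (i < attack)
                envelope[i] = (float)i / attack;                  // ramp up from 0
            else if (i >= total - release)
                envelope[i] = (float)(total - 1 - i) / release;   // ramp down to 0
            else
                envelope[i] = 1f;                                 // hold
        }
        return envelope;
    }
}
```

In Unity, an array such as `EnvelopeBuilder.Build(44100, 30f, 5f, 10f)` could be wrapped in a clip with `AudioClip.Create("envelope", data.Length, 1, 44100, false)` followed by `clip.SetData(data, 0)`, then assigned to the prefab's AudioSource. Different attack and release times on different prefabs give the short, sharp or pad-like shapes described above.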

Challenge 5 - add the DSP to your prefab

If your package installs properly, then you’ll be able to drop the script whose name begins with FaustPlugin onto your game object prefab. This will automatically set up an AudioSource on the prefab.

Challenge 6 - spawn your prefab based on some time parameters

Make an empty gameObject called spawnMonger. This will host the following script.
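A minimal sketch of a spawner along these lines, offered as an illustration rather than as the workshop's own script. Apart from `spawnee`, the class name, field names and default values are assumptions. It waits a random interval, places a clone of the prefab at a random point on a disc around the spawner, and caps the number of live clones.

```csharp
using UnityEngine;

// Hypothetical spawner: attach to the empty spawnMonger object.
public class SpawnMonger : MonoBehaviour
{
    public GameObject spawnee;        // the prefab to clone
    public float minInterval = 2f;    // seconds between spawns (lower bound)
    public float maxInterval = 8f;    // seconds between spawns (upper bound)
    public float spawnRadius = 20f;   // clones appear within this radius
    public int maxAlive = 12;         // upper limit on simultaneous clones

    private float nextSpawnTime;

    void Start()
    {
        ScheduleNext();
    }

    void Update()
    {
        if (Time.time < nextSpawnTime) return;

        // Clones are parented to this object, so childCount is the live count.
        if (spawnee != null && transform.childCount < maxAlive)
        {
            Vector2 offset = Random.insideUnitCircle * spawnRadius;
            Vector3 position = transform.position + new Vector3(offset.x, 0f, offset.y);
            Instantiate(spawnee, position, Quaternion.identity, transform);
        }
        ScheduleNext();
    }

    void ScheduleNext()
    {
        nextSpawnTime = Time.time + Random.Range(minInterval, maxInterval);
    }
}
```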

Challenge 7 - kill your Prefabs

Add this script to your prefab; it should kill the oscillator once the envelope has finished playing. Notice that the envelope’s pitch is changed, which affects the lifetime of the prefab. When the sound has finished playing, the cloned prefab is killed.
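A minimal sketch of how such a script might work, again an illustration and not the workshop's own code. The class name, field names and pitch range are assumptions. It relies on the fact that a clip played at pitch p lasts its normal length divided by p, so a 30 second envelope at pitch 2 lasts 15 seconds.

```csharp
using UnityEngine;

// Hypothetical script: attach to the prefab alongside its AudioSource.
[RequireComponent(typeof(AudioSource))]
public class KillWhenDone : MonoBehaviour
{
    public float minPitch = 0.5f;
    public float maxPitch = 2f;

    void Start()
    {
        AudioSource source = GetComponent<AudioSource>();

        // Without an envelope clip there is nothing to wait for.
        if (source.clip == null)
        {
            Destroy(gameObject);
            return;
        }

        // Keep the pitch positive so the lifetime below stays finite.
        source.pitch = Mathf.Max(0.01f, Random.Range(minPitch, maxPitch));
        source.Play();

        // Playback duration scales inversely with pitch.
        float lifetime = source.clip.length / source.pitch;
        Destroy(gameObject, lifetime);
    }
}
```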

Challenge 8 - load in some audio files instead

This script, written by Leo Butt and slightly modified by Martin Parker, can be used if you’re unable or unwilling to use the FaustPlugin that we made above. You could also make a new prefab, load this script onto it, and have both samples and synthesis going on.

You will first need to make a Resources folder in the main Assets directory. Then make a folder inside that called samples_01. Drop in a pile of samples there.

Next, make a 3D cube game object and drop the script below onto it. Then make the cube into a prefab by dragging it into the Prefabs folder.

Remove the cube from the scene.

Add this prefab as the spawnee by dragging it across to the field in the SpawnMonger that we made.
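A minimal sketch of what a sample-loading script could look like. This is an illustration only, not the script by Leo Butt and Martin Parker; the class and field names are assumptions. It loads every clip from Resources/samples_01, plays one at random, and removes the clone when the clip ends.

```csharp
using UnityEngine;

// Hypothetical script: attach to the cube before turning it into a prefab.
[RequireComponent(typeof(AudioSource))]
public class RandomSamplePlayer : MonoBehaviour
{
    // Folder name relative to Assets/Resources.
    public string resourceFolder = "samples_01";

    void Start()
    {
        AudioClip[] clips = Resources.LoadAll<AudioClip>(resourceFolder);
        if (clips.Length == 0)
        {
            Debug.LogWarning("No audio clips found in Resources/" + resourceFolder);
            Destroy(gameObject);
            return;
        }

        AudioSource source = GetComponent<AudioSource>();
        source.clip = clips[Random.Range(0, clips.Length)];
        source.spatialBlend = 1f;   // fully 3D, so position in the world matters
        source.Play();

        // Remove the clone once the sample has played through.
        Destroy(gameObject, source.clip.length);
    }
}
```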

References

Tenney, J. (1994). In a large open space (1st ed.).